In this post, I will share my thoughts about interview preparation process for Software Engineer (SE) position. It might be useful for those who are planning to interview with companies which employ tough algorithmic problems to select candidates. I will start with the review of my background, then briefly describe my preparation process, and, finally, provide a posteriori preparation advice.

Circulating Tumor Cells in Microfluidics

Recently we developed software package called uDeviceX for blood cells flow modeling. This software allows performing computationally efficient simulations of flow of deformable blood cells in channel geometry of arbitrary complexity. The code is written on CUDA/C++ and is targeting GPU-enabled supercomputers such as Titan at ORNL or Piz Daint at CSCS.

We used Dissipative Particle Dynamics for the fluid modeling and fluid-structure interactions. The solid walls were modeled using Signed Distance Function. We employed validated red blood cell membrane model which takes into account both elastic and viscous terms.

Although uDeviceX is not the first package where mentioned models were used, it is the most computationally efficient software and the first available online. Our work is among the finalist of the Gordon Bell Award 2015. In particular, we demonstrated perfect weak scalability on the whole Titan (18,688 KX20 GPU nodes) and good strong scalability on Piz Daint. Details about software, test cases, and performance results are published in ACM SC’15 Proceedings.

A recently published video of some simulations in microfluidics using our software:

The authors order corresponds to the contribution to the visualization. Mitsuba was used for rendering.

Curvature Flow in Curvature Space

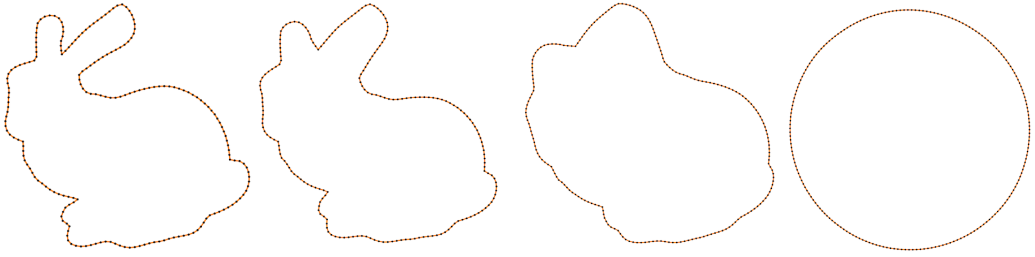

Recently I came across an amazing paper “Robust fairing via Conformal Curvate flow” by K. Crane et al. at SIGGRAH 2013 and decided to reproduce the results. The basic idea of the approach is in usage of the principal curvatures instead of vertex coordinate itself for the solution of PDE. Roughly speaking, at each iteration for every vertex the curvature is computed, than modified according to the chosen PDE, and, finally, a new position is restored out of the curvature. So for instance, you want to edit your surface or curve using Willmore flow, traditionally it is evaluated in terms of positions of vertecies themself, it involves spatial dirivatives, Laplace-Beltrami operator depending on positions. Thus the implementation is complicated and the numerical scheme requires small time steps to converge. By constrast, reformulataion of the problem in terms of curvature gives a very simple numerical scheme, which works with much bigger time steps (in current work 108 times bigger). In addition to that, this reformulation allows to preserve desired properties of the manifold (e.g. length, angle).

Crane et. al. created a general framework and applied it to 1D manifolds (curves) and 2D manifolds (surfaces). I reproduced results only for the 1D case, since 2D is much more time consuming - working with group of quaternions, half-densities, and dirac operator - is too much for a hobby project.

Seam Carving Algorithm

Intro

Seam carving is an algorithm for content-aware image resizing, it was described in the paper by S. Avidan & A. Shamir. In contract to stretching, content-aware resizing allows to remove/add pixels which has less meaning while saving more important. Pictures below demonstrate this - original picture of the size 332x480 is on the top, the picture after applying seam carving (size is 272x400) is on the bottom

This algorithm is quite impressive so one may find a lot of articles describing it. Yet as I found most of the authors haven’t read the original paper and provide a very basic implementation. In this post I will describe the algorithm with all details as it was written by Avidan & Shamir. Yet I will write from the programmers point of view, without going into Math too deep. In addition to algorithms description, I also provide Matlab code.

Level Sets With OpenVDB. Quick Introduction. Part 1

Intro

From the high-level of view level-set method (further just level set) can be considered as geometry representation, in addition to polygonal meshes and NURBS. This geometry representation simplifies solution of some problems in Computational Geometry, physical based modeling in Computer Graphics. Although level sets were first used for geometry tracking in 80s by Osher and Sethian, the main development of this method took place in the end of 90s and during 2000s.

Convert OpenNI (*.oni) Files Into Avi - Oni2avi

OpenNI is a library for work with Kinect camera. I prefer to use it instead of .net libraries from Microsoft because OpenNI is more open and, I think, it is a more natural to use it on on unix systems. OpenNI saves video and depth map obtained from Kinect camera into it’s own data format oni. Sometimes it is desirable to have avi files instead because there are a lot of code made for this format. So I wrote a simple command line converter from oni to avi data format available oni2avi. I developed it because one friend of mine who is using Matlab asked me to help him in converting oni file to avi. There is a plugin to Matlab which allows to read oni files directly but for whatever reason he could not use it. Hope, this code will be usefull for someone else. Oni2Avi needs OpenNI, OpenCV and boost. Please, read README file. In addition, you must to use a modern C++ compiler. If you use gcc, it must be 4.6 or newer. In case if you use Mac OS and macport, do the following:

1 2 3 4 5 6 7 | |

If you need any support, please write to issues, click button “New Issue” and then add a proper label - bug or question. An alternative option is to write to my email (address is written in the About section of this blog).

UPD: Since I shared this tool, I’ve got many letters with questions. Primarily from students who are doing something with Kinect. The most questions are about building oni2avi, so below are some recomendations:

- pathes for libraries and includes in the Makefile are set to the default location for the case you are using MacOS+Macport or Ubuntu+standartPackageInstaller. Thus if you have libs or includes in a different place, you need to specify where they are in the Makefile

- if you see a compilation error, please check that all pathes are correc. If you are on Ubuntu, also check whether you need -dev packages to have includes. Finally, check the version of libraries you are using. I suppose that OpenCV version >= 2.3, boost version >= 1.48, OpenNI version >= 1.53

- if you’ve found a bug, specify a version of libraries you use, command line options for the tool, and input/output files you’ve got.

OpenVDB Build on MacOS

OpenVDB is a new library by DreamWorks which contains data structures and tools for work with three-dimensional grid. For instance, it can be used to work with level-sets. On the openvdb web site it is written that it is checked to be build only on RedHat Linux, so I decided to save my experience about making it on the MacOS (Lion, 10.7.5), with gcc 4.7.2 and openvdb-v0-103-1.

Lammps Data Formats Into TecPlot ASCI Data Format

One of the TecPlot data formats is a simple ASCI format. It is deprecated but can be opened in both TecPlot and Paraview. This data format has variations so further I will use it for atoms and velocity profiles visualizations.

Tips About Building and Profiling With Cray Perftoolkit

Cray compiler generates one of the fastest code. On Cray XE6 lammps compiled by Cray Compiler(8.1.2, -O2) outperform gcc code (4.7, -Ofast) in 1.6 times. If you use Cray compiler, it has sense to use Cray’s perftoolkit for finding bottlenecks in your MPI/OpenMP application. This post is about tips about using these tools because I always forget details. I will build and analyze LAMMPS.

LAMMPS: How to Compute Fluid Viscosity

The theory is described in “Poiseuille flow to measure the viscosity of particle model fluids” by J. A. Backer et al. Below I describe how to use this approach in LAMMPS.